Artificial intelligence is no longer a futuristic concept; it’s a present-day necessity. From startups building intelligent apps to enterprises deploying large-scale machine learning systems, the demand for scalable AI infrastructure has never been higher. Yet, with this demand comes a familiar challenge: complexity.

Between managing GPUs, optimizing inference workloads, handling unpredictable costs, and maintaining uptime, many teams find themselves spending more time on infrastructure than innovation. That’s where DigitalOcean’s Gradient™ AI Inference Cloud steps in, offering a streamlined, developer-friendly platform designed to make scaling AI simple, predictable, and efficient.

A New Approach to AI Infrastructure

DigitalOcean has long been known for simplifying cloud computing, and Gradient™ AI continues that tradition. Built specifically for modern AI workloads, the platform provides everything developers need to train, fine-tune, and deploy models without the operational overhead typically associated with AI infrastructure.

Instead of stitching together multiple services, Gradient™ offers a unified environment where compute, storage, and deployment tools work seamlessly together. This allows teams to move quickly from experimentation to production, all within a single ecosystem.

Built for Performance at Scale

Whether you’re running large language models or deploying real-time AI applications, performance matters. Gradient™ AI delivers:

- GPU-powered compute for high-performance training and inference

- High-throughput storage to handle massive datasets

- Scalable runtime environments that grow with your application

With support for advanced hardware like H100 GPUs, developers can run demanding AI workloads efficiently without needing deep infrastructure expertise.

Predictable Pricing, No Surprises

One of the biggest pain points in cloud computing is cost unpredictability. Complex billing models and hidden fees can quickly derail budgets.

DigitalOcean tackles this head-on with transparent, predictable pricing. With generous bandwidth allowances and low-cost inference options, businesses can scale confidently knowing exactly what they’ll pay.

This clarity is especially valuable for startups and growing teams, where cost control is critical to long-term success.

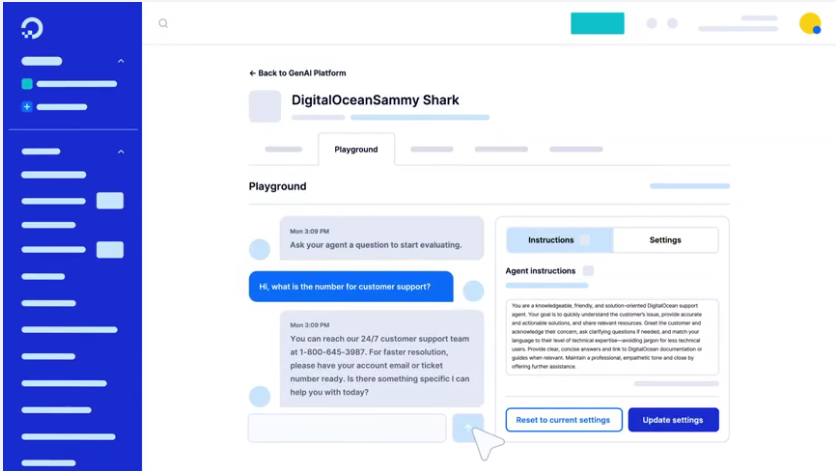

Developer-First Experience

Gradient™ AI is designed with developers in mind. From intuitive interfaces to extensive documentation and tutorials, the platform removes friction at every step.

Popular resources include:

- Step-by-step guides for deploying virtual machines

- Tutorials on running AI models and containers (like Docker)

- Real-world examples such as building AI chatbots

The goal is simple: help developers spend less time troubleshooting infrastructure and more time building meaningful AI solutions.

Reliable, Global Infrastructure

When it comes to production AI systems, reliability is non-negotiable. DigitalOcean supports its platform with:

- Globally distributed data centers

- 99.99% uptime SLAs

- Robust networking and security features

This ensures applications remain responsive and available, even under heavy workloads.
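To put the 99.99% figure in perspective, it helps to convert it into a downtime budget. The short Python sketch below is plain arithmetic on the percentage, not a statement of DigitalOcean's actual SLA terms: the function name `allowed_downtime_minutes` and the 30-day and 365-day windows are assumptions chosen for illustration, and a real SLA defines its own measurement period and exclusions.

```python
def allowed_downtime_minutes(uptime_percent: float, period_days: float = 30) -> float:
    """Return the maximum downtime, in minutes, that an uptime target
    permits over a period of `period_days` days.

    Illustrative helper only: the measurement window is an assumption,
    not something taken from a published SLA.
    """
    if not 0 <= uptime_percent <= 100:
        raise ValueError("uptime_percent must be between 0 and 100")
    total_minutes = period_days * 24 * 60
    # The downtime budget is the fraction of the period NOT covered by the target.
    return total_minutes * (100 - uptime_percent) / 100


if __name__ == "__main__":
    # 99.99% over an assumed 30-day month
    print(f"{allowed_downtime_minutes(99.99):.2f} minutes per 30 days")        # 4.32
    # 99.99% over an assumed 365-day year
    print(f"{allowed_downtime_minutes(99.99, 365):.2f} minutes per 365 days")  # 52.56
```

In other words, a 99.99% target leaves a budget of only a few minutes of downtime per month, which is why it matters for production AI systems that serve requests around the clock.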

Supporting a Growing AI Ecosystem

With over 600,000 customers worldwide, DigitalOcean has become a trusted partner for developers, startups, and businesses building the next generation of applications.

From AI-powered chatbots to data-driven platforms, companies are leveraging Gradient™ AI to bring ideas to life faster and more cost-effectively than ever before.

From “Uhhh” to AI Faster

Perhaps the most compelling aspect of Gradient™ AI is its simplicity. By removing unnecessary complexity, DigitalOcean empowers teams to go from concept to deployment quickly.

No more juggling multiple vendors. No more unexpected costs. Just a clean, powerful platform designed to help you build, scale, and succeed with AI.

Final Thoughts

As AI continues to reshape industries, the need for accessible, scalable infrastructure will only grow. DigitalOcean’s Gradient™ AI Inference Cloud meets this need with a clear promise: powerful AI capabilities without the usual headaches.

For developers and businesses looking to scale AI without complexity, the path forward is becoming clearer, and much simpler.